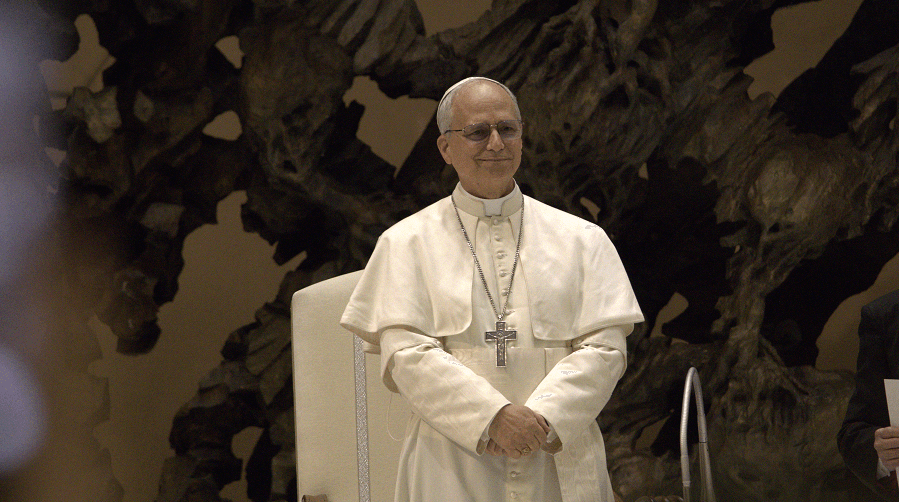

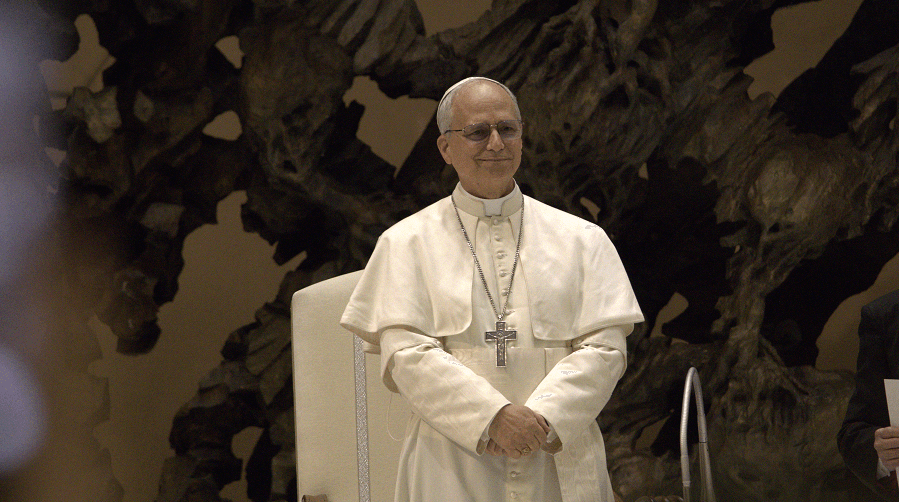

Pope Leo XIV's Encyclical Warns of AI's Human Impact

In a bold move, Pope Leo XIV's encyclical warns about AI's potential risks, urging society to consider the ethical implications as technology evolves.

Pope Leo XIV's recent encyclical emphasizes AI's ethical and societal impacts and urges regulation to prevent the abuse of concentrated power. He calls for AI to serve humanity rather than profit.