Why AIs Prefer Guesswork Over Asking for Help

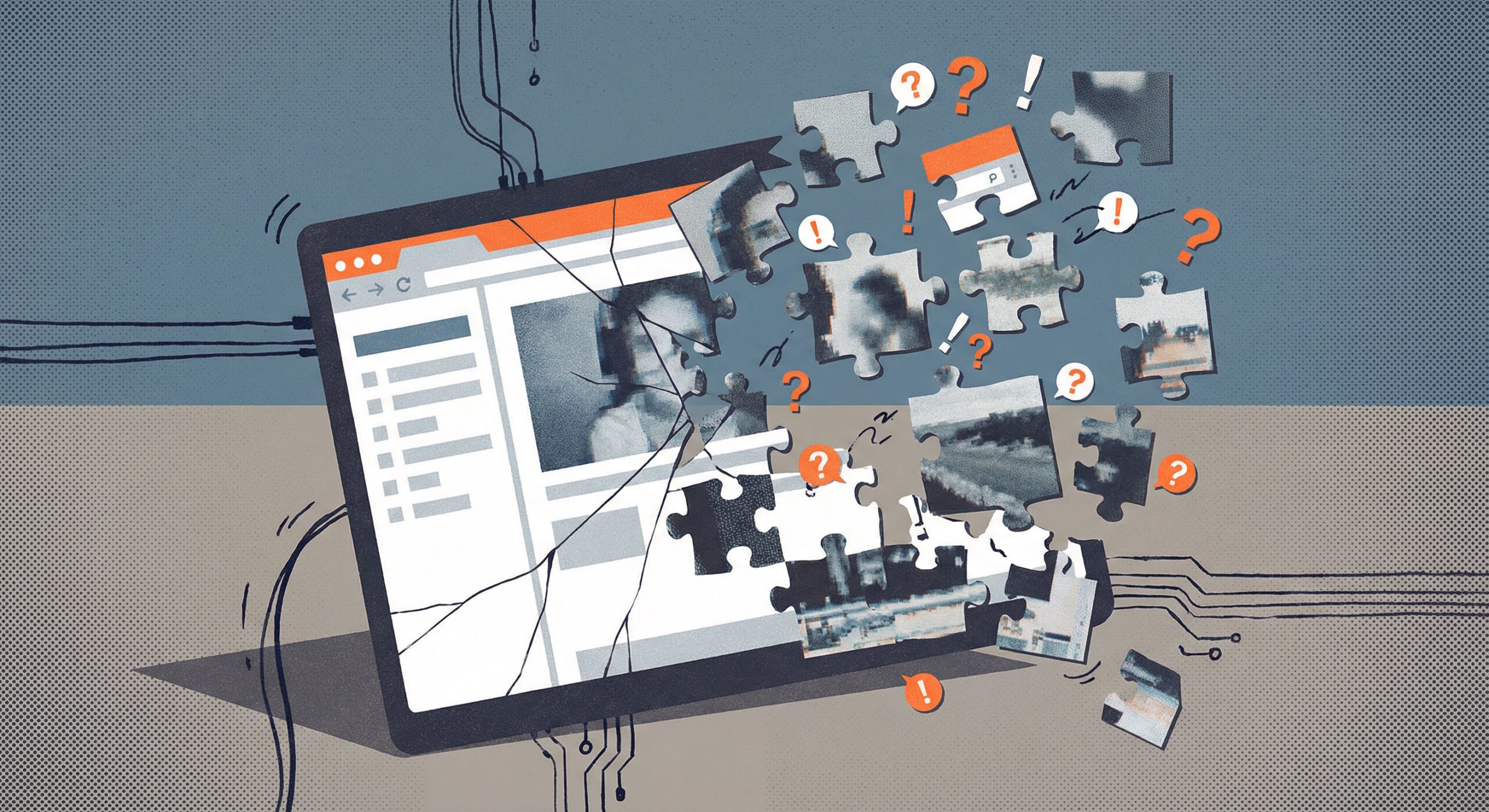

A recent study reveals that most multimodal AI models choose to improvise rather than seek assistance when they lack visual data. The findings suggest a lack of adaptability, but a simple reinforcement strategy might offer a solution.

Artificial intelligence, it seems, would rather gamble on a guess than reach out for guidance when it hits a visual snag. In a recent study, researchers tested 22 multimodal language models to see how they'd handle missing visual information. The outcome was rather telling: these models preferred to fill in the gaps on their own, largely ignoring the option to ask for help.

Unpacking the Findings

The researchers devised ProactiveBench, a benchmark that evaluates whether these sophisticated AI systems actively request assistance when they encounter visual ambiguity. The results were clear: almost none of the models asked for what they lacked. This suggests a significant gap in their proactivity and adaptability.
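To make the idea concrete, here is a minimal sketch of how a "did the model ask for help?" score could be computed. It is not the ProactiveBench implementation: the `Episode` record, its fields, and the `help_request_rate` function are hypothetical names chosen for illustration, and the sample data is invented.

```python
from dataclasses import dataclass


@dataclass
class Episode:
    """One hypothetical test item (illustrative, not the benchmark's real schema)."""
    visual_info_missing: bool   # True if the image lacks what the question needs
    model_asked_for_help: bool  # True if the model requested more input


def help_request_rate(episodes):
    """Fraction of missing-information episodes in which the model asked for help."""
    relevant = [e for e in episodes if e.visual_info_missing]
    if not relevant:
        return 0.0  # nothing to measure, so report zero rather than divide by zero
    asked = sum(1 for e in relevant if e.model_asked_for_help)
    return asked / len(relevant)


# Invented sample: three items with missing visual data, one with complete data.
episodes = [
    Episode(visual_info_missing=True, model_asked_for_help=False),
    Episode(visual_info_missing=True, model_asked_for_help=False),
    Episode(visual_info_missing=True, model_asked_for_help=True),
    Episode(visual_info_missing=False, model_asked_for_help=False),
]
rate = help_request_rate(episodes)
print(f"Help-request rate: {rate:.2f}")
```

A rate near zero on a score like this would correspond to the behavior the study describes: the model guesses even when the information it needs is absent.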

One might wonder why these models, otherwise touted for their complex capabilities, fall short in this regard. It raises the question of whether our current approach to training AI has equipped them more for inference than for interaction. After all, isn't the hallmark of intelligence knowing when to seek help?

Reinforcement Learning: A Glimmer of Hope?

While the current state of affairs might seem bleak, there's a glimmer of hope on the horizon. The research indicates that a simple reinforcement learning approach could mend this oversight. By encouraging models to seek assistance, we could potentially enhance their performance in situations where visual data is incomplete.

This matters not only on a technical level but also in practical applications. Consider AI systems deployed in environments where safety is critical. If they're guessing instead of asking, the risks could be substantial. Thus, instilling a kind of 'ask-first' policy through reinforcement learning could lead to more reliable AI systems.
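One way to picture an 'ask-first' incentive is as a reward function that pays the model for asking when information is missing and penalizes confident wrong guesses. The sketch below is an assumption-laden illustration, not the method used in the study: the function name `ask_first_reward`, the action labels, and every numeric value are hypothetical.

```python
def ask_first_reward(action, info_sufficient, answer_correct=False,
                     ask_bonus=0.5, wrong_guess_penalty=-1.0,
                     needless_ask_penalty=-0.2):
    """Hypothetical reward for one model decision.

    action: "ask" (request more visual input) or "answer" (commit to a guess).
    info_sufficient: whether the available visual data was enough to answer.
    answer_correct: whether the committed answer was right (ignored for "ask").
    All reward magnitudes are illustrative assumptions.
    """
    if action == "ask":
        # Asking is rewarded only when something was actually missing.
        return needless_ask_penalty if info_sufficient else ask_bonus
    if action == "answer":
        # A correct answer earns full reward; a wrong guess is penalized.
        return 1.0 if answer_correct else wrong_guess_penalty
    raise ValueError(f"unknown action: {action!r}")


# With missing data, asking scores higher than a wrong guess.
print(ask_first_reward("ask", info_sufficient=False))
print(ask_first_reward("answer", info_sufficient=False, answer_correct=False))
```

The small penalty for needless questions is a design choice in this sketch: without it, a model could learn to ask every time rather than only when the visual data is incomplete.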

The Bigger Picture

The implications are profound. This study challenges the notion of what it means for AI to truly learn and adapt. Is machine learning's focus too narrow, prioritizing prediction over interaction? The answer could shape the future trajectory of AI development.

While the study reveals a shortfall in current AI design, it also opens the door for improvement. By addressing this gap, we can enhance not just the models themselves, but also the trust and reliability of AI in critical applications. The question isn't just if models will adapt, but when.

Key Terms Explained

Artificial intelligence: The science of creating machines that can perform tasks requiring human-like intelligence, such as reasoning, learning, perception, language understanding, and decision-making.

Inference: Running a trained model to make predictions on new data.

Machine learning: A branch of AI where systems learn patterns from data instead of following explicitly programmed rules.

Multimodal AI: AI models that can understand and generate multiple types of data, including text, images, audio, and video.