The US-China AI Rivalry: A Tighter Race Than You Think

The once clear-cut US lead in AI is now a neck-and-neck race with China, according to Stanford's latest AI Index Report. While model performance gaps shrink, safety benchmarks lag behind.

The notion that the United States maintains an unassailable lead in artificial intelligence is increasingly outdated. Stanford University's 2026 AI Index Report reveals that the US-China divide in AI model performance has effectively closed. This isn't just a technicality; it's a significant shift in global tech dynamics.

Model Performance: A Tightening Race

The report notes that US and Chinese AI models have been neck-and-neck since early 2025, frequently trading top spots. For instance, in February 2025, China's DeepSeek-R1 briefly matched the best US model. As of March 2026, the top US model, from Anthropic, leads by a mere 2.7%.

While the US still churns out more top-tier models, 50 in 2025 compared to China’s 30, China takes the lead in publication volume, citation share, and patent grants. China's share of the top 100 most-cited AI papers jumped from 33 in 2021 to 41 in 2024. South Korea stands out too, boasting the highest AI patents per capita.

What does this mean for the US? The comfortable technological lead it once enjoyed is eroding, and assumptions of a durable advantage look increasingly flimsy.

AI Safety: Lagging Behind

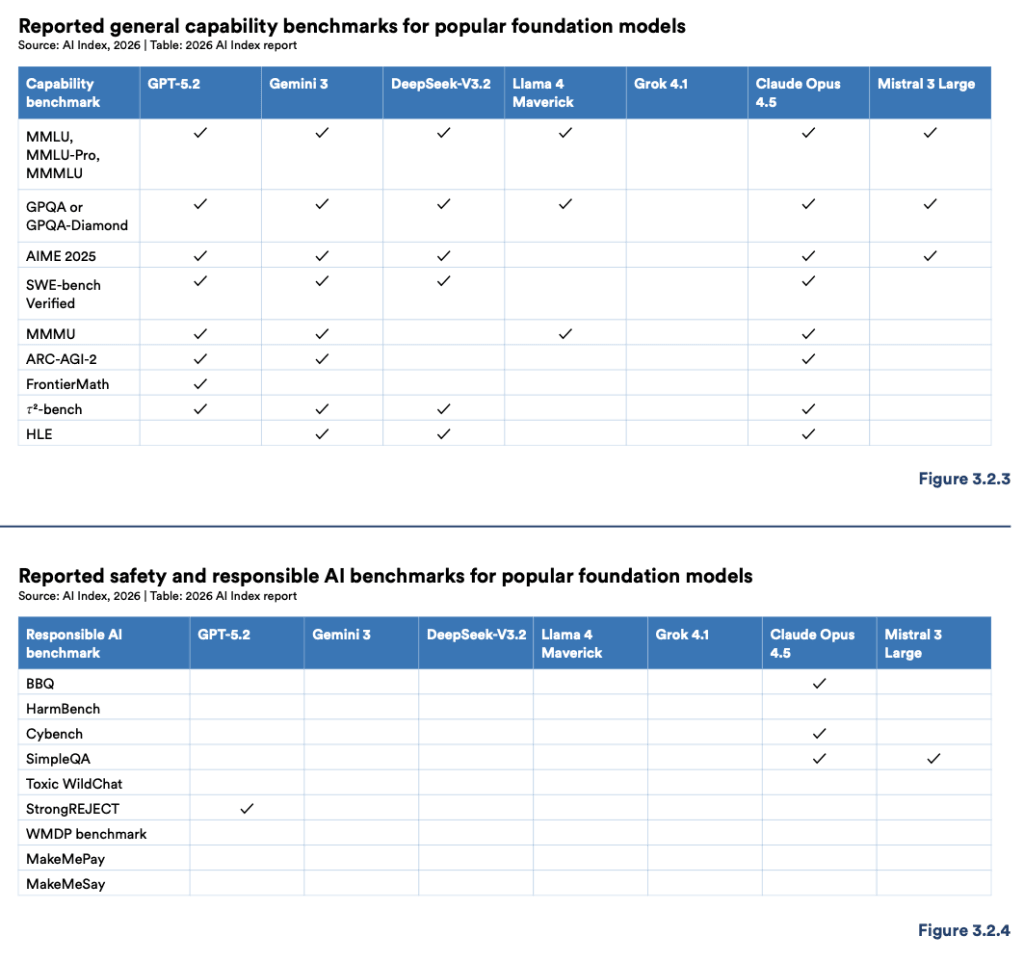

While the competition in model performance is fierce, AI safety benchmarks are sorely lacking. Almost all major developers highlight their models' capabilities but report little on responsible AI measures. Only Claude Opus 4.5 reports on more than two safety benchmarks, and GPT-5.2 is the only model that reports StrongREJECT results.

Developers do run internal safety efforts such as red-teaming and alignment testing, but the results aren't transparently benchmarked or comparable across models. The lack of standardized metrics makes safety assessments virtually impossible for external reviewers. With AI incidents rising, from 233 in 2024 to 362 in 2025 according to the AI Incident Database, it's an important area that has yet to receive adequate attention.
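To put the incident figures quoted above in relative terms, here is a minimal sketch of the arithmetic. The helper name `relative_change` is an illustrative assumption, not something taken from the report or the AI Incident Database; the two counts are the ones cited in this article.

```python
def relative_change(old: float, new: float) -> float:
    """Return the fractional change from old to new (0.10 means +10%)."""
    if old == 0:
        raise ValueError("relative change is undefined when the starting value is 0")
    return (new - old) / old


# Incident counts as quoted in this article (AI Incident Database).
incidents_2024 = 233
incidents_2025 = 362

growth = relative_change(incidents_2024, incidents_2025)
print(f"{incidents_2025 - incidents_2024} more incidents, a {growth:.1%} increase")
# prints "129 more incidents, a 55.4% increase"
```

In other words, reported incidents grew by a little more than half in a single year.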

Why isn't more being done to bridge this gap? The gap between pilot and production is where most initiatives fail, and AI safety seems stuck in a perpetual pilot phase.

Public Trust and Regulatory Challenges

Public sentiment compounds these challenges. While global adoption of AI climbs, so does public concern. According to the report, 59% of people now believe AI's benefits outweigh its drawbacks, up from 55% in 2024. Yet, 52% express nervousness about AI products, reflecting a growing unease even as usage expands.

The expert-public divide is most glaring on AI's impact on employment, with 73% of AI experts optimistic versus only 23% of the general public. This gap could shape regulatory environments, as public trust, or the lack thereof, directly influences policy-making. Notably, the US reports the lowest confidence in its ability to regulate AI responsibly, at just 31% compared to a global average of 54%.

Why should enterprises care? Enterprises don't buy AI. They buy outcomes. And the outcome of ignoring public and safety concerns could be stringent regulations that disrupt business as usual.

