How Machines Are Shaping the Future Battlefield

Lockheed Martin's CTO envisions a future where humans and AI systems team up on the battlefield. As autonomous weapons gain traction, accountability and decision-making processes are under scrutiny.

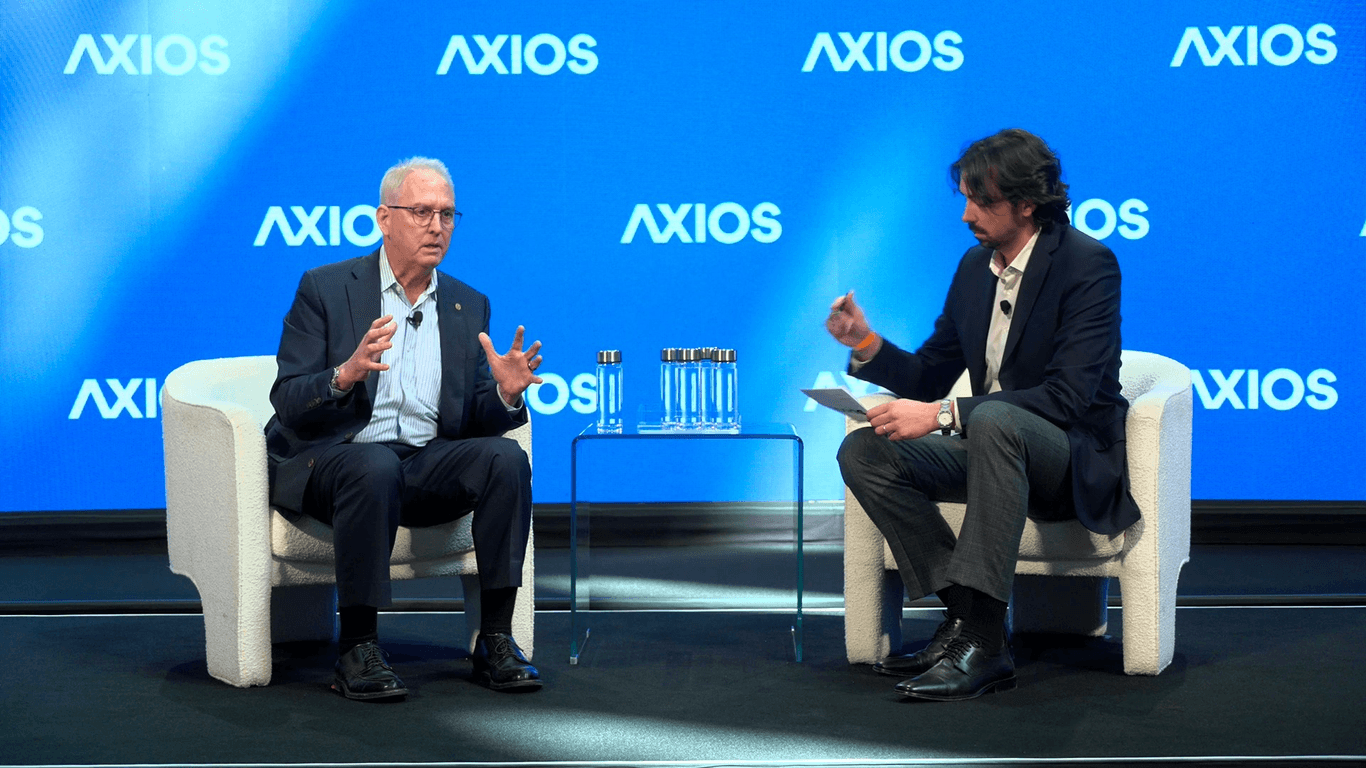

Lockheed Martin’s chief technology officer, Craig Martell, envisions a battlefield where humans and machines operate as a cohesive unit. At the recent AI+DC Summit, Martell emphasized that the future of warfare depends on effective human-machine collaboration.

The Need for Human-Machine Teaming

According to Martell, simply relying on statistical models at scale won’t create truly cognitive machines. The emphasis should be on building a partnership between human operators and AI systems. The question isn’t only one of trust but also of accountability: who takes the fall if an autonomous system errs? Martell believes this responsibility lies with the human in charge of training and deploying the AI.

“I choose to use it,” Martell stated. “And if it gets it wrong, my fault.” This reflects a tangible shift in military strategy. It’s not just about deploying autonomous weapons, but about ensuring that human operators understand the AI’s limitations and potential errors.

A New Era for Military Hardware

Take the Army’s recent acquisition of an autonomous-ready Black Hawk helicopter. Developed with a Lockheed Martin subsidiary, the helicopter can execute missions independently or under remote supervision, and it is currently undergoing rigorous testing. The acquisition marks a significant step toward integrating autonomous systems into military operations, in line with the broader trend toward drone warfare and unmanned vehicles.

Why is this important? Because the collision between advanced AI and military hardware isn’t just theoretical anymore. With these developments, the overlap between AI and military hardware keeps growing. The military’s pivot toward autonomy could redefine warfare dynamics. If machines can act on their own, who holds the controls?

Beyond Technology: The Ethical Dimension

As the military leans into AI, ethical considerations can't be overlooked. The potential for autonomous weapons to make life-and-death decisions without human intervention raises moral questions. How do we ensure these systems align with human values and legal standards? Martell's vision of human-machine teaming suggests a path forward, but it demands rigorous oversight.

The Pentagon’s investment in AI isn’t just about gaining a tactical edge. It’s about setting a precedent for responsible AI use in warfare. These systems need compute, but they also require an ethical roadmap. As the U.S. military’s AI advantage over nations like China grows, it’s crucial that development doesn’t outpace oversight.

In an era where machines are becoming integral to combat strategies, the line between advantage and autonomy blurs. The implications of these advancements extend beyond technology, demanding a broader conversation about the future of warfare.


Key Terms Explained

Compute: The processing power needed to train and run AI models.

Responsible AI: The practice of developing and deploying AI systems with careful attention to fairness, transparency, safety, privacy, and social impact.

Training: The process of teaching an AI model by exposing it to data and adjusting its parameters to minimize errors.