Gemma 4: A New Contender in AI's Open-Weight Arena

Google DeepMind's Gemma 4 challenges the AI open-weight landscape with its Apache 2.0 models, offering a practical U.S. alternative to Chinese giants.

This week, Google DeepMind shook up the AI world with the release of Gemma 4. It's the most significant U.S.-based open-weight model we've seen in a while. While China has been dominating the open-weight scene, especially with its massive Mixture-of-Experts (MoE) models, Gemma 4 introduces a fresh contender from across the Pacific.

A Welcome U.S. Alternative

The Gemma 4 series brings a solid Apache 2.0 licensed family to the market. It's aimed at those who still want to run models on local hardware or within more contained enterprise boundaries. But here's the twist: the appetite for self-hosting is shrinking. Anthropic's recent announcement of a $30 billion run-rate, up from $1 billion in December 2024, signals the shift: more customers are choosing large language model APIs over local deployments.

Gemma 4 isn't aiming to end Chinese dominance overnight. Instead, it offers a credible U.S. alternative for businesses concerned with compliance and data control, especially in regulated sectors.

Technical Specs and Market Impact

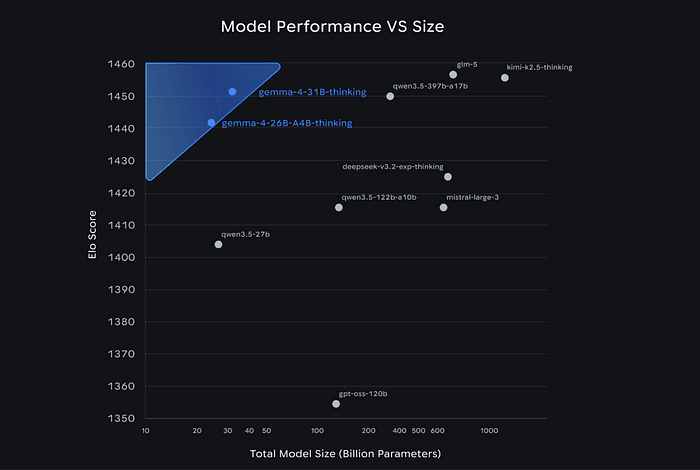

Google launched four Gemma 4 variants: two edge models (E2B and E4B), a 31B dense flagship, and a 26B A4B MoE designed for higher-throughput tasks. The 31B, in particular, impresses with its benchmark scores, such as 1,452 on Arena AI text and 86.4% on Tau2-bench retail.

The architecture of Gemma 4 is intentionally conservative, which could be its strength. It relies more on refinement through reinforcement learning and data than on drastic architectural changes. The larger models target consumer GPUs and single-H100 deployments, which makes them practical for local and air-gapped environments, while the two edge models cover on-device use.
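To make the hardware claim concrete, here is a rough back-of-the-envelope sketch of how much memory the weights alone would need at different precisions. It uses only the parameter counts quoted above. The device capacities (a 24 GB consumer GPU and an 80 GB H100), the 20% headroom for KV cache and activations, and every name in the code are illustrative assumptions, not figures from Google.

```python
# Rough weight-memory estimate: parameters x bytes per parameter.
# Ignores KV cache, activations, and runtime overhead beyond a flat headroom factor.

def weight_memory_gb(params_billion: float, bits_per_param: int) -> float:
    """Approximate weight storage in decimal GB."""
    return params_billion * bits_per_param / 8


def fits(params_billion: float, bits_per_param: int,
         capacity_gb: float, headroom: float = 0.8) -> bool:
    """True if the weights fit in `headroom` of the device memory (assumed 80%)."""
    return weight_memory_gb(params_billion, bits_per_param) <= capacity_gb * headroom


# Parameter counts from the article; a MoE still has to hold all of its weights in memory.
MODELS_B = {"31B dense": 31.0, "26B A4B MoE": 26.0}

# Assumed device capacities, for illustration only.
DEVICES_GB = {"consumer GPU (24 GB)": 24.0, "single H100 (80 GB)": 80.0}

if __name__ == "__main__":
    for model, params in MODELS_B.items():
        for bits in (16, 8, 4):
            size = weight_memory_gb(params, bits)
            targets = [d for d, cap in DEVICES_GB.items() if fits(params, bits, cap)]
            print(f"{model} @ {bits}-bit: ~{size:.1f} GB weights, fits: {targets or 'none'}")
```

Under these assumptions, the 31B model at 16-bit precision needs about 62 GB for weights, which is within reach of a single H100, while a 4-bit quantized copy needs about 15.5 GB, which is the range where a high-end consumer GPU becomes plausible. Real deployments would need to budget more for long contexts.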

Why Gemma 4 Matters

Why should we care about Gemma 4? It's simple. It reshapes the open-weight market. While Chinese labs have produced remarkable trillion-parameter models, Gemma 4 offers a U.S.-origin alternative that's easier to deploy and more compliant with Western standards. For teams needing control over data and tuning flexibility, Gemma 4 provides a real option.

Yet, with Anthropic's success, the broader market seems to be veering towards hosted solutions. Does this narrow the role of open weights? Perhaps, but it also clarifies their purpose. Open models should focus on use cases where locality and control matter more than staying at the cutting edge of capability.

The AI engineering landscape is moving away from fine-tuning smaller models to compete with strong out-of-the-box closed models. Gemma 4 finds its niche by offering an adaptable, self-sufficient alternative where it counts. Africa isn't waiting to be disrupted. It's already building with tools like these.

Key Terms Explained

Anthropic: An AI safety company founded in 2021 by former OpenAI researchers, including Dario and Daniela Amodei.

Benchmark: A standardized test used to measure and compare AI model performance.

DeepMind: A leading AI research lab, now part of Google.

Fine-tuning: The process of taking a pre-trained model and continuing to train it on a smaller, specific dataset to adapt it for a particular task or domain.