Epic Code Leak: How Anthropic's Security Fumble Exposed AI Secrets

A single misstep exposed Anthropic's ambitious AI project, revealing 512,000 lines of TypeScript. The incident raises urgent questions about AI security and ethics.

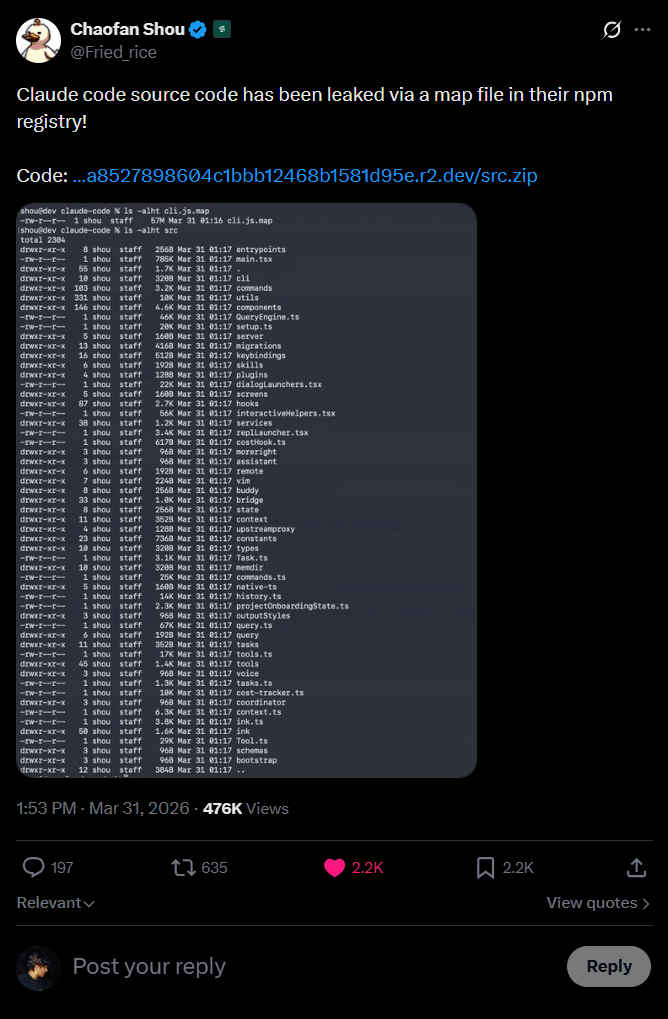

In a striking revelation that underscores the fragility of digital security, Anthropic's Claude Code, comprising over 512,000 lines of TypeScript, was laid bare by a glaring oversight: a missing line in a configuration file. The leak, which occurred in the early hours of March 31, 2026, is more than just a technical snafu. It's a wake-up call for the AI community.

The Code and Its Secrets

This massive trove of code isn't just mundane lines of software. It's a window into Anthropic's ambitious attempts to blend AI with human interaction. Among the standout features are a virtual pet and an autonomous assistant, concepts that blur the line between utility and companionship. Such innovations speak volumes about the direction AI is heading, but they also raise alarms about privacy and control.

Security Implications

The leak exposes significant security vulnerabilities. If a missing line can cause this much damage, what other weaknesses lie unnoticed in complex AI systems? This incident is a stark reminder of the necessity for rigorous checks and balances in software development. Companies must ask themselves: Are we doing enough to safeguard our digital assets?
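One form such a check could take is an automated gate that inspects a release's file list before anything is published. The sketch below is purely illustrative: the article does not say which file or setting was involved, so the function names (`findForbiddenFiles`, `assertSafeToPublish`) and the pattern list are assumptions, not a reconstruction of Anthropic's tooling.

```typescript
// Hypothetical pre-release guard. Every name and pattern here is an
// illustrative assumption, not taken from any real build pipeline.

// Files that, in this sketch, should never appear in a public artifact.
const FORBIDDEN_PATTERNS: RegExp[] = [
  /\.map$/,              // source maps, which can embed original source
  /(^|\/)\.env(\..*)?$/, // environment files such as .env or .env.local
  /(^|\/)src\//,         // unbundled source directories
];

// Return every path in the file list that matches a forbidden pattern.
function findForbiddenFiles(
  files: string[],
  patterns: RegExp[] = FORBIDDEN_PATTERNS,
): string[] {
  return files.filter((file) => patterns.some((pattern) => pattern.test(file)));
}

// Throw if anything forbidden is present, so a CI step fails
// instead of letting the release go out.
function assertSafeToPublish(files: string[]): void {
  const offenders = findForbiddenFiles(files);
  if (offenders.length > 0) {
    throw new Error(
      `Refusing to publish; unexpected files: ${offenders.join(", ")}`,
    );
  }
}
```

A guard like this does not depend on any single configuration line being present: even if an exclusion rule is accidentally dropped, the release step fails loudly rather than shipping the extra files.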

Ethical Concerns and Industry Impact

Beyond the technical fallout, the ethical considerations loom large. The potential misuse of such powerful technology is concerning. Those in AI development need to reckon not only with the capabilities of their creations but also with the moral responsibilities that come with them. The conversation around AI ethics is no longer theoretical. It's urgent and real.

The competitive landscape of AI development will likely feel the ripple effects. In an industry where proprietary advancements can dictate market dominance, the inadvertent release of such information could tilt the balance. How will competitors respond, and what measures will companies implement to prevent similar incidents in the future?

The Road Ahead

Ultimately, this isn't just about Anthropic's misstep. It's a broader commentary on the industry. AI developers and researchers must prioritize security and ethics as foundational pillars, not afterthoughts. While the allure of pushing AI boundaries is undeniable, it should never overshadow the imperative to protect and ethically guide such innovations.
