Cracking the Code: Demystifying Large Language Models

Berkeley researchers unveil SPEX and ProxySPEX, groundbreaking tools to interpret complex AI systems. Dive into how these algorithms aim to make AI more transparent and reliable.

Understanding what makes large language models (LLMs) tick isn’t just a puzzle for tech enthusiasts. It's a pressing concern for anyone interested in the future of AI. Researchers at Berkeley are tackling this head-on with new tools that promise to reveal the inner workings of these complex systems.

The Complexity Conundrum

LLMs and their intricate behaviors are hard to pin down. They act like black boxes, with their decisions shrouded in mystery. Here’s the kicker: understanding how those decisions come about isn’t just academic. It's essential for creating safer and more trustworthy AI. If you’re wondering why that matters, just ask anyone who's been on the receiving end of an AI glitch.

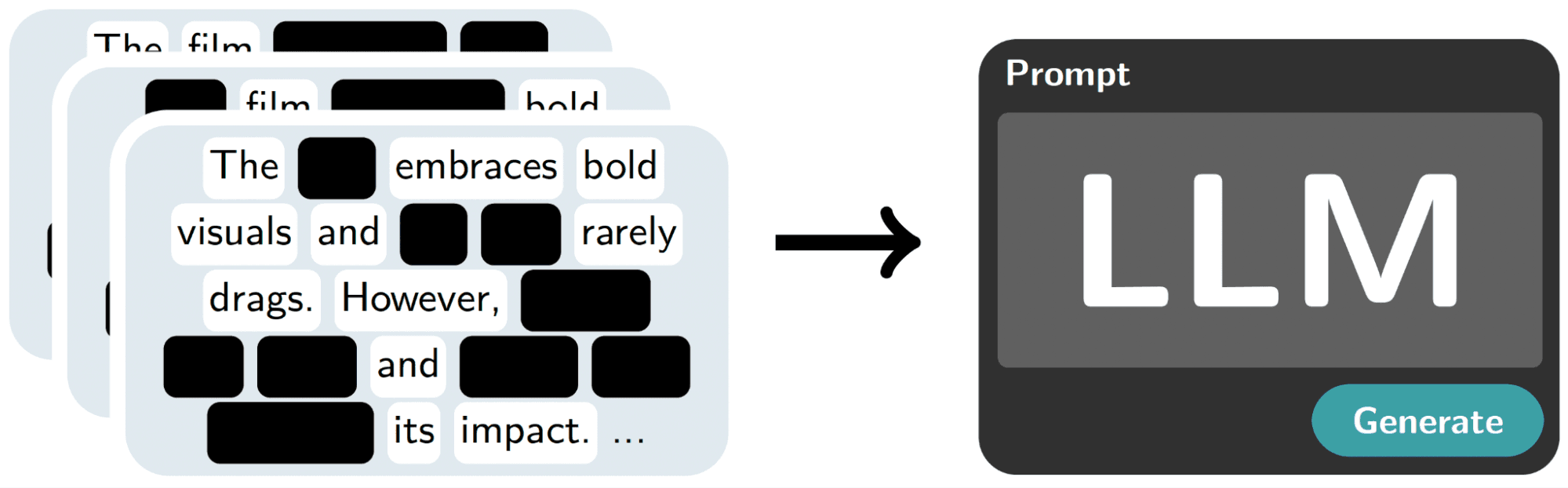

The core of the issue is complexity. LLMs synthesize countless feature relationships and training data points. They're like intricate webs where pulling one thread impacts the whole structure. SPEX and its sibling ProxySPEX come into play by identifying influential interactions within these models, even when they’re operating at massive scales.

Revealing Interactions with SPEX

SPEX, or Spectral Explainer, uses advanced signal processing techniques to highlight which interactions truly matter. It's based on a simple yet powerful principle: while there may be countless potential interactions in a model, only a few have any real impact. This is where the magic of SPEX lies. It narrows down the noise to spotlight these critical interactions.
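To make the sparsity principle concrete, here is a minimal Python sketch. It is not the SPEX algorithm: the `toy_model` function, its weights, and the brute-force `fourier_coefficients` helper are all hypothetical, chosen only for illustration. The sketch masks features on and off, measures the strength of every possible interaction, and shows that only a couple are nonzero. Exhaustive enumeration like this only works for a handful of features, which is exactly why a method that exploits sparsity is needed at LLM scale.

```python
from itertools import combinations, product
from math import prod

N_FEATURES = 4  # tiny on purpose: brute force needs 2**n model calls


def toy_model(mask):
    """Hypothetical stand-in for a model's output under feature masking.

    mask[i] is 1 if feature i is kept and 0 if it is masked out.
    By construction, only feature 0 and the pair (1, 2) matter.
    """
    s = [1 if kept else -1 for kept in mask]
    return 2.0 * s[0] + 3.0 * s[1] * s[2]


def fourier_coefficients(f, n):
    """Brute-force interaction strengths for every subset of n features."""
    inputs = list(product([0, 1], repeat=n))
    coeffs = {}
    for size in range(n + 1):
        for subset in combinations(range(n), size):
            total = 0.0
            for mask in inputs:
                # Parity of the subset: +1 or -1 depending on which features are kept.
                sign = prod(1 if mask[i] else -1 for i in subset)
                total += f(mask) * sign
            coeffs[subset] = total / len(inputs)
    return coeffs


coeffs = fourier_coefficients(toy_model, N_FEATURES)
significant = {s: c for s, c in coeffs.items() if abs(c) > 1e-9}
print(f"{len(significant)} of {len(coeffs)} possible interactions matter")
print(significant)
```

Of the 16 possible subsets of four features, only two carry any weight: feature 0 on its own, and the pair of features 1 and 2 acting together.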

ProxySPEX adds another layer by introducing the concept of hierarchy. The idea is that if an interaction among several features is important, the smaller interactions among subsets of those features likely matter too. This approach slashes computational costs, achieving similar results with a fraction of the effort. It's like finding a needle in a haystack but knowing exactly where to look.
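The hierarchy idea can be illustrated with a simple pruning sketch in Python. This is an Apriori-style search written for illustration, not ProxySPEX's actual procedure, and the `toy_model`, `coefficient`, and `hierarchical_search` names are hypothetical. The search only examines a larger interaction if every one of its smaller sub-interactions already proved important, so most candidates are never looked at.

```python
from itertools import combinations, product
from math import prod

N = 6  # number of features in the toy example


def toy_model(s):
    """Hypothetical model output; s is a tuple of +1 (kept) / -1 (masked).

    The pair (1, 2) matters, and so do its components 1 and 2 (hierarchy).
    """
    return 2.0 * s[0] + 1.5 * s[1] + 1.0 * s[2] + 3.0 * s[1] * s[2]


def coefficient(f, n, subset):
    """Exact interaction strength for one subset, by enumerating all inputs."""
    inputs = product([-1, 1], repeat=n)
    total = sum(f(s) * prod(s[i] for i in subset) for s in inputs)
    return total / 2**n


def hierarchical_search(f, n, threshold=1e-9):
    """Grow candidate interactions level by level, pruning with hierarchy."""
    found = {}
    examined = 0
    frontier = [(i,) for i in range(n)]  # start with single features
    while frontier:
        important = []
        for subset in frontier:
            examined += 1
            c = coefficient(f, n, subset)
            if abs(c) > threshold:
                found[subset] = c
                important.append(subset)
        # Next level: keep a candidate only if all of its sub-interactions
        # one size smaller were important at this level.
        important_set = set(important)
        size = len(frontier[0]) + 1
        items = sorted({i for s in important for i in s})
        frontier = [
            cand
            for cand in combinations(items, size)
            if all(sub in important_set for sub in combinations(cand, size - 1))
        ]
    return found, examined


found, examined = hierarchical_search(toy_model, N)
print(f"examined {examined} of {2**N - 1} non-empty candidate interactions")
print(found)
```

In this toy setting the search examines 9 candidates instead of all 63 non-empty subsets and still recovers every important interaction. The shortcut only pays off when the hierarchy assumption holds; an interaction whose components have no effect on their own would be missed.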

Why It Matters

So, why should you care about this deep dive into AI’s guts? Because this isn’t just about understanding LLMs. It’s about building AI that respects and responds to human needs. SPEX and ProxySPEX offer a pathway to developing AI that we can trust, systems that are less likely to make inexplicable, potentially harmful decisions.

These tools also shine a light on how AI models make sense of data, which is essential for fields beyond tech. Imagine applying this to genomics or environmental science, where understanding complex interactions can lead to breakthroughs.

The future isn’t about AI dictating outcomes in a black box. It's about making AI that collaborates with us, meeting our needs while explaining its reasoning. SPEX and ProxySPEX are a step in that direction.
