Cadence and Nvidia: Elevating AI with Physics-Based Simulation

Cadence Design Systems is partnering with Nvidia and Google Cloud on AI-driven physics simulation and cloud-based chip design automation, targeting robotics and semiconductor development.

Cadence Design Systems is doubling down on AI with new collaborations. At its CadenceLIVE event this week, Cadence announced a deeper engagement with Nvidia that combines AI with physics-based simulation and accelerated computing for robotics and semiconductor design. More than a routine partnership announcement, it signals a convergence of simulation and AI tooling.

Nvidia and Physical AI

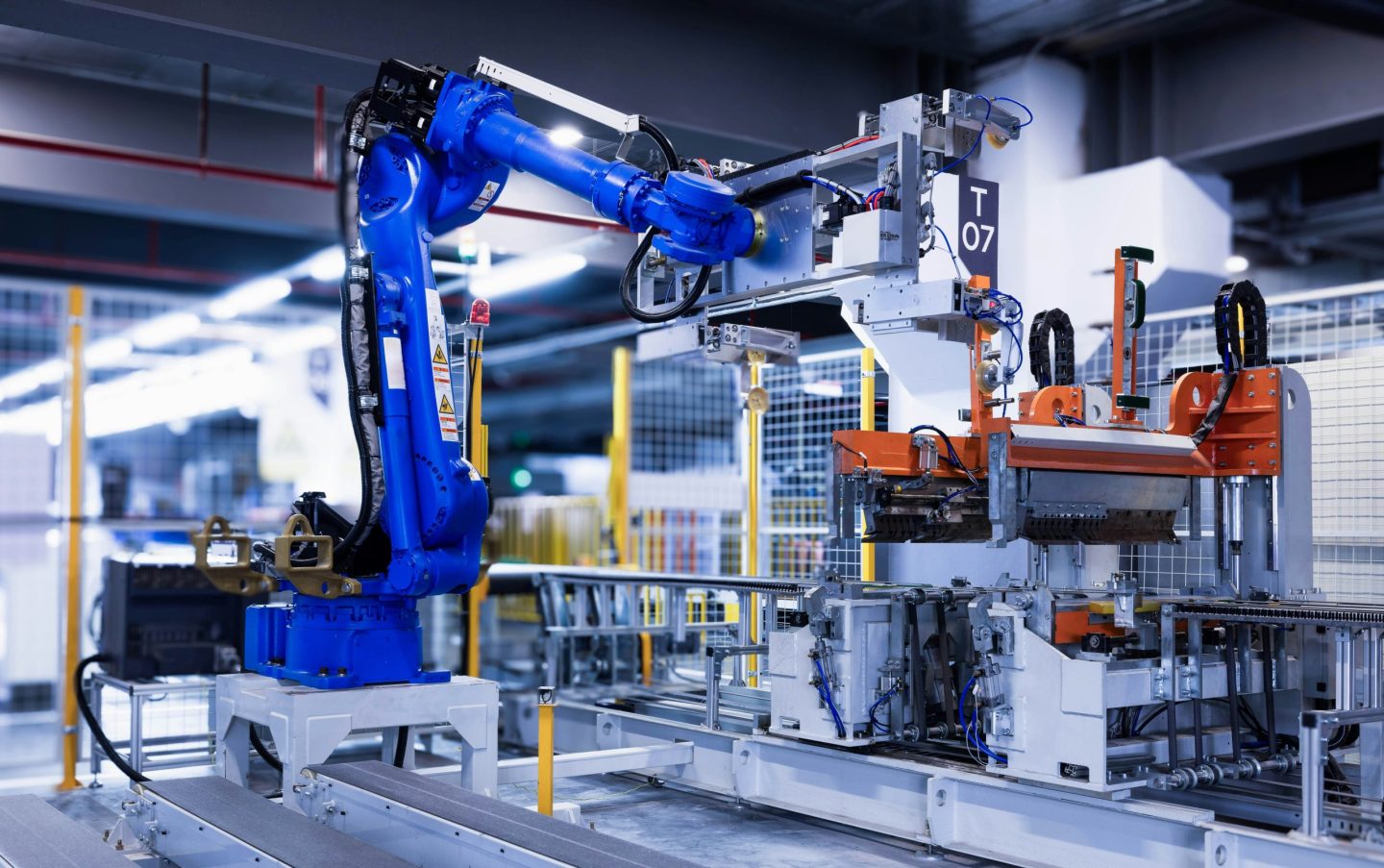

The Cadence-Nvidia collaboration targets modeling and deployment across semiconductors, robotics, and large-scale AI infrastructure. By integrating Cadence's multiphysics simulation tools with Nvidia's CUDA-X libraries, AI models, and Omniverse simulation environments, the two companies aim to replicate and predict real-world system behavior. Engineers can evaluate how thermal, electrical, and mechanical interactions affect system performance before a single robot hits the factory floor.

But why does this matter? The overlap between AI and physics-based simulation is growing. If engineers can simulate and refine systems in a digital environment, the transition to physical deployment can be smoother and more efficient. This could spell the end of costly trial-and-error methods in industrial robotics.

Robotics in a Virtual World

Robotics development is another frontier in this collaboration. By linking Cadence's physics engines with Nvidia's AI models, robots can be trained in simulated environments, bypassing the need for extensive real-world data collection. The resulting simulation-generated datasets could be the key to training more accurate AI models for robotics. Nvidia's Isaac simulation frameworks, coupled with Omniverse-based tools, supply the environment for that training.

That makes the digital environments where these robots learn and evolve central to the effort. Companies such as ABB Robotics, FANUC, and KUKA have taken notice and are already integrating these tools into virtual commissioning workflows.
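To make the idea of simulation-generated training data concrete, here is a minimal toy sketch in Python. It does not use any Cadence or Nvidia tooling: the damped point-mass model, the parameter values, and every function name are assumptions invented for illustration. A tiny physics simulator produces labeled samples, and a simple model is then fitted to them without any real-world measurements.

```python
import random

# Toy physics model (an assumption for illustration, not a Cadence or Nvidia API):
# a point mass with linear damping, advanced by one explicit-Euler time step.
def simulate_step(velocity, force, mass=2.0, damping=0.5, dt=0.01):
    acceleration = (force - damping * velocity) / mass
    return velocity + dt * acceleration

def generate_dataset(n_samples, seed=0):
    """Build (velocity, force, next_velocity) samples purely from simulation."""
    rng = random.Random(seed)
    samples = []
    for _ in range(n_samples):
        v = rng.uniform(-1.0, 1.0)
        f = rng.uniform(-5.0, 5.0)
        samples.append((v, f, simulate_step(v, f)))
    return samples

def fit_linear_model(samples):
    """Least-squares fit of next_velocity ~ a * velocity + b * force,
    solved with the 2x2 normal equations."""
    svv = sum(v * v for v, f, y in samples)
    svf = sum(v * f for v, f, y in samples)
    sff = sum(f * f for v, f, y in samples)
    svy = sum(v * y for v, f, y in samples)
    sfy = sum(f * y for v, f, y in samples)
    det = svv * sff - svf * svf
    a = (svy * sff - svf * sfy) / det
    b = (svv * sfy - svf * svy) / det
    return a, b

if __name__ == "__main__":
    data = generate_dataset(1000)
    a, b = fit_linear_model(data)
    print(f"learned dynamics: next_v = {a:.4f} * v + {b:.4f} * f")
```

Because the toy simulator is exactly linear, the fitted coefficients should match the ones implied by the physics parameters (1 - dt * damping / mass for velocity, dt / mass for force). Real robotics workflows involve far richer simulators and models, but the pattern of simulating, labeling, and fitting is the same in spirit.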

Cadence and Google Cloud: Automating Chip Design

Cadence isn't stopping with Nvidia. It is also launching an AI agent with Google Cloud to automate later-stage chip design tasks. The agent translates circuit designs into silicon implementations, building on an earlier version that handled front-end chip design. The integration with Google Cloud means these complex tasks can run without heavy on-premises compute infrastructure.

Cadence claims productivity gains of up to 10 times in early deployments. It has not said which customers are seeing these gains or how they are achieving them, and it remains tight-lipped about specific implementations.

Separately, Nvidia unveiled open-source quantum AI models, named NVIDIA Ising, intended to make quantum computing more practical. With these models, Nvidia seeks to turn fragile qubits into scalable and reliable quantum-GPU systems. AI, it seems, is poised to become the control plane for quantum machines.

Key Terms Explained

AI agent: An autonomous AI system that can perceive its environment, make decisions, and take actions to achieve goals.

Compute: The processing power needed to train and run AI models.

CUDA: NVIDIA's parallel computing platform that lets developers use GPUs for general-purpose computing.

GPU: Graphics Processing Unit.