Bridging the Dexterity Divide: Human-Like Grasping for Robots

RobustDexGrasp aims to close the gap between human and robotic dexterity in grasping tasks. The framework combines vision, proprioception, and touch to let robots handle complex, real-world objects with near-human proficiency.

Look at your hand while you're reading this. It's likely working with ease, holding a phone or clicking a mouse. The human hand, with more than 20 degrees of freedom, handles tasks from swinging hammers to turning tiny screws almost instinctively. For robotic hands, matching this dexterity is a significant challenge, but not an impossible one.

Dexterous Robots: Meeting the Challenge

While many robots today still use basic grippers, the potential of dexterous robot hands is enormous. In principle, they can adapt to almost any object, manipulating everything from delicate needles to hefty basketballs, and can even execute intricate tasks like rotating keys or using scissors. Yet the complexity of dexterous manipulation remains a formidable barrier for robotics.

Grasping is a fundamental skill in this area. Without it, robots can't effectively pick up tools or manage complex tasks. The ability to grasp diverse objects reliably is essential for integrating robots further into daily life.

The Complexity of Dexterous Grasping

The path to dexterous robotic grasping is riddled with challenges. First, the control complexity is vast. Because each finger affects the entire grasp, determining the right movements and forces in real time is extremely hard. Imagine deciding which finger moves when, and with how much force, as if you were solving a puzzle.

Second, robots must generalize their grasp strategies across a multitude of shapes, sizes, and materials. This isn't about programming each object type; it's about the system's ability to adapt on the fly. Third, there's the challenge of perception: relying on a single camera introduces uncertainties such as depth ambiguity and partial occlusion. Robots need to 'see' and 'understand' objects without detailed 3D models to handle these challenges effectively.

Introducing RobustDexGrasp: A New Framework

Enter RobustDexGrasp, a framework designed to tackle these issues head-on. Using a teacher-student curriculum for high-dimensional control, it learns ideal strategies in simulation before adapting to real-world disturbances. This approach mirrors how humans learn complex tasks, starting with a guided scenario before encountering real-world variables.
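To make the teacher-student idea concrete, here is a minimal sketch in Python. It is not RobustDexGrasp's training code: the one-dimensional task, the `teacher_policy` gain, the noise level, and the least-squares fit are all assumptions chosen for illustration. The teacher sees the exact hand-to-object offset, as it could in simulation, while the student only sees a noisy version and is fitted to imitate the teacher's actions.

```python
import random


def teacher_policy(true_offset):
    # Hypothetical teacher: sees the exact hand-to-object offset
    # (privileged simulator state) and responds with a fixed gain.
    return 2.0 * true_offset


def distill_student(num_samples=2000, noise_std=0.05, seed=0):
    """Fit a one-parameter student (action = gain * noisy_offset)
    to the teacher's actions by closed-form least squares."""
    rng = random.Random(seed)
    sum_xy = 0.0
    sum_xx = 0.0
    for _ in range(num_samples):
        true_offset = rng.uniform(-1.0, 1.0)
        # The student only gets a noisy observation, as a real sensor would give.
        noisy_offset = true_offset + rng.gauss(0.0, noise_std)
        target_action = teacher_policy(true_offset)
        sum_xy += noisy_offset * target_action
        sum_xx += noisy_offset * noisy_offset
    return sum_xy / sum_xx


student_gain = distill_student()
print(f"student gain: {student_gain:.3f}")
```

The student ends up with a gain close to the teacher's, slightly reduced because its inputs are noisy. A real system would replace the scalar gain with a neural network and the toy task with a simulated hand, but the division of roles is the same.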

Next, the system uses hand-centric intuition for shape generalization. Rather than memorizing every detail of an object, it focuses on where surfaces are relative to its fingers. It's about knowing where to place your hands to lift a chair, not memorizing its color.
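One way to picture a hand-centric description is as a set of offsets from each finger to the nearest visible surface point. The sketch below is an assumption-laden illustration, not the framework's actual representation: the function name `hand_centric_features`, the `max_range` cutoff, and the sample coordinates are all hypothetical.

```python
import math


def hand_centric_features(finger_points, surface_points, max_range=0.10):
    """For each finger keypoint, return (dx, dy, dz, distance) to the
    nearest surface point. Points beyond max_range (meters, assumed)
    are reported as a zero offset with the distance capped at max_range."""
    features = []
    for finger in finger_points:
        nearest = min(surface_points, key=lambda p: math.dist(finger, p))
        distance = math.dist(finger, nearest)
        if distance > max_range:
            features.append((0.0, 0.0, 0.0, max_range))
        else:
            offset = tuple(s - f for s, f in zip(nearest, finger))
            features.append(offset + (distance,))
    return features


# Hypothetical fingertip positions and observed surface points, in meters.
fingertips = [(0.0, 0.0, 0.0), (0.03, 0.0, 0.0)]
surface = [(0.0, 0.0, 0.02), (0.03, 0.01, 0.0), (1.0, 1.0, 1.0)]
features = hand_centric_features(fingertips, surface)
for row in features:
    print(row)
```

Because the features are relative to the fingers, moving the hand and the object together leaves them unchanged, and nothing about the object's overall identity needs to be stored.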

By integrating multi-modal perception, the system combines visual input with proprioception and touch. This way, robots aren't just 'seeing' but are aware of their 'body' and 'touch', just like when you reach out to confirm what you see with your hand.
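As a rough sketch of what combining these senses can look like in code, the three signals can be packed into a single observation that a control policy consumes. The class name `GraspObservation`, its fields, and the sample values are assumptions for illustration; the article does not describe the framework's actual input format.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class GraspObservation:
    visual_features: List[float]  # vision: e.g. finger-to-surface distances
    joint_angles: List[float]     # proprioception: hand joint angles in radians
    contact_flags: List[bool]     # touch: one contact flag per fingertip

    def to_vector(self) -> List[float]:
        """Concatenate all modalities into one flat list of floats."""
        touch = [1.0 if in_contact else 0.0 for in_contact in self.contact_flags]
        return list(self.visual_features) + list(self.joint_angles) + touch


obs = GraspObservation(
    visual_features=[0.02, 0.05],
    joint_angles=[0.1, 0.4, 0.7],
    contact_flags=[True, False],
)
vector = obs.to_vector()
print(vector)
```

Keeping the modalities as separate named fields until the last step makes it easy to see which sense contributed which numbers, and to zero one out when testing how much the policy relies on it.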

Real-World Success and Future Potential

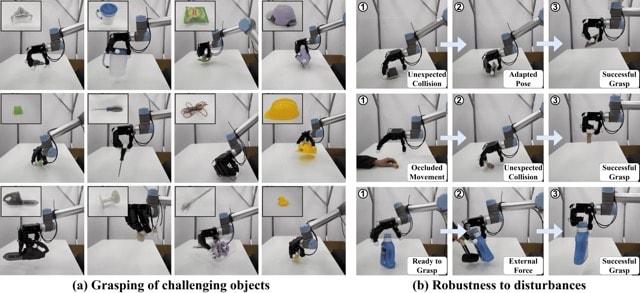

RobustDexGrasp's results are impressive. With a 94.6% success rate on a diverse set of 512 objects, it deftly handles thin boxes, heavy tools, and even transparent bottles. Notably, it maintains a secure grip even under external pressure and dynamically adjusts when objects slip, a significant leap in dexterity.

What's next? The goal is to extend these capabilities to smaller objects and non-prehensile interactions like pushing. As robots close in on human-like dexterity, the possibilities are vast. Will robots soon be as adept at grasping as humans? Perhaps they're closer than we think.
