Anthropic's Claude: Chatbot That Crafts Interactive Visuals

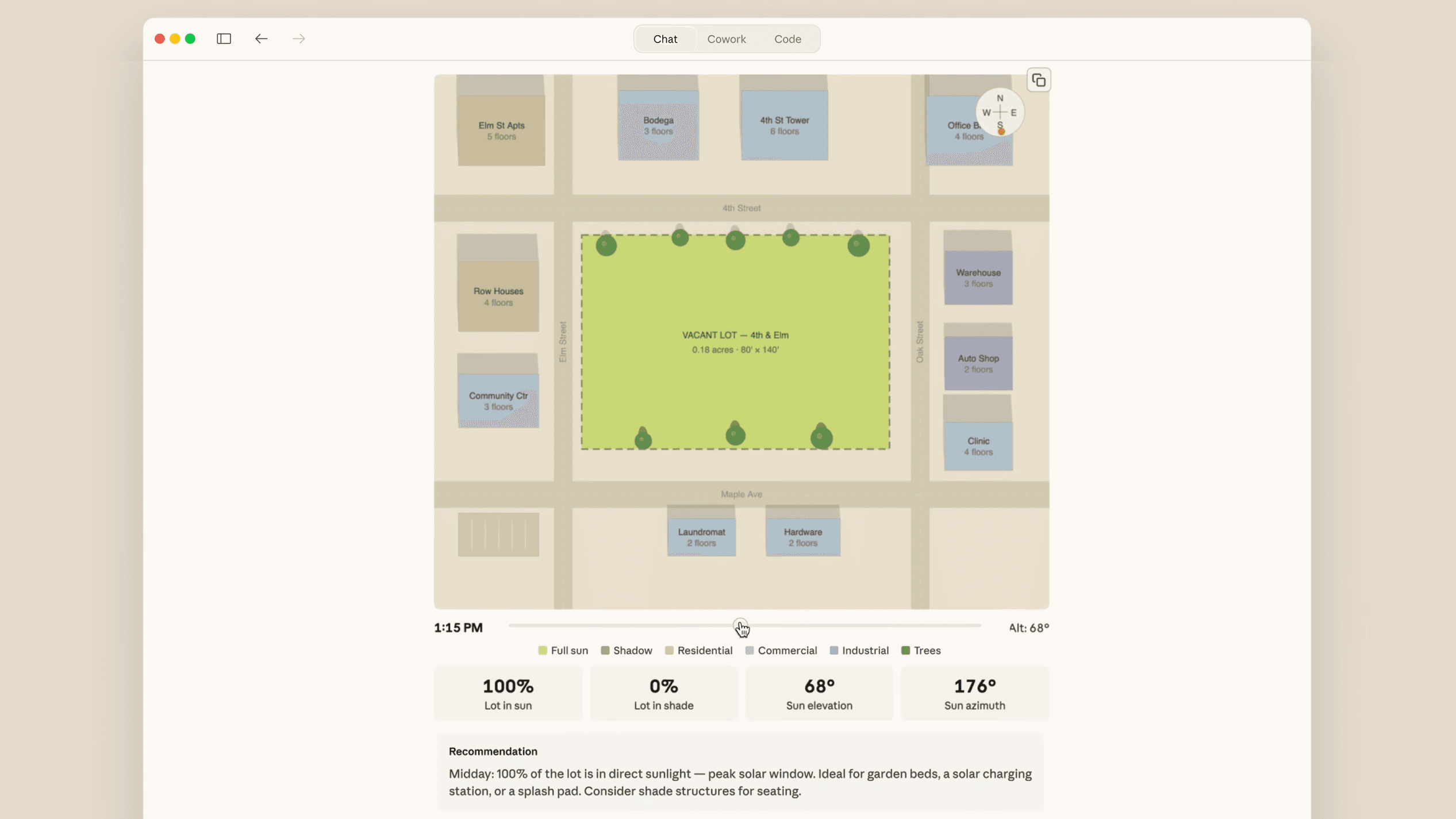

Anthropic's AI chatbot Claude now generates interactive diagrams and visualizations, a feature that could reshape how we interact with data.

Anthropic has rolled out a new beta feature for its AI chatbot, Claude. Users can now generate interactive diagrams and visualizations directly within their chat sessions. This isn't just a tweak; it's a fundamental shift in how AI tools can enable interaction with data.

Visuals Meet AI

Claude's new capability transforms static text interactions into dynamic visual experiences. It's akin to giving words a shape and form, allowing users to engage with data in a more intuitive manner. The question is, who truly understands the implications of making complex data visually interactive at the click of a button?

Consider this: if AI chatbots like Claude can craft visuals on demand, the barrier to entry for data analysis plummets. Suddenly, everyone from novices to experts can play with intricate datasets without needing a background in data science. But does democratizing data visualization dilute the expertise required to interpret it correctly?

Why It Matters

The industry is littered with vaporware claims, but Claude's development marks a tangible step forward. Interactive visuals could redefine how industries use data, enhancing decision-making processes across sectors. Show me the inference costs, though. Only then will we see whether this feature truly democratizes data insight or just adds another layer of complexity.

Let's not kid ourselves: interactive visuals are just the beginning. As AI continues to evolve, its convergence with data visualization will only deepen. We can expect this capability to expand, incorporating more nuanced datasets and offering deeper insights.

The Road Ahead

Anthropic's move signals a trend that others will likely follow. But remember, slapping a model on a GPU rental isn't a convergence thesis. Reliable AI tools must prove their worth in practical application, not just on paper. The intersection is real. Ninety percent of the projects aren't.

If the AI can hold a wallet, who writes the risk model? As we tread this new territory, it becomes essential to ask: at what point do we start trusting AI over human interpretation? With Claude's new features, that question becomes ever more pertinent.

Key Terms Explained

Anthropic: An AI safety company founded in 2021 by former OpenAI researchers, including Dario and Daniela Amodei.

Chatbot: An AI system designed to have conversations with humans through text or voice.

Claude: Anthropic's family of AI assistants, including Claude Haiku, Sonnet, and Opus.

GPU: Graphics Processing Unit.