Anthropic's AI Battle: Technology Meets Policy

Anthropic is caught in a high-stakes battle with the Pentagon over AI use in warfare. The outcome could shape the future of military AI integration.

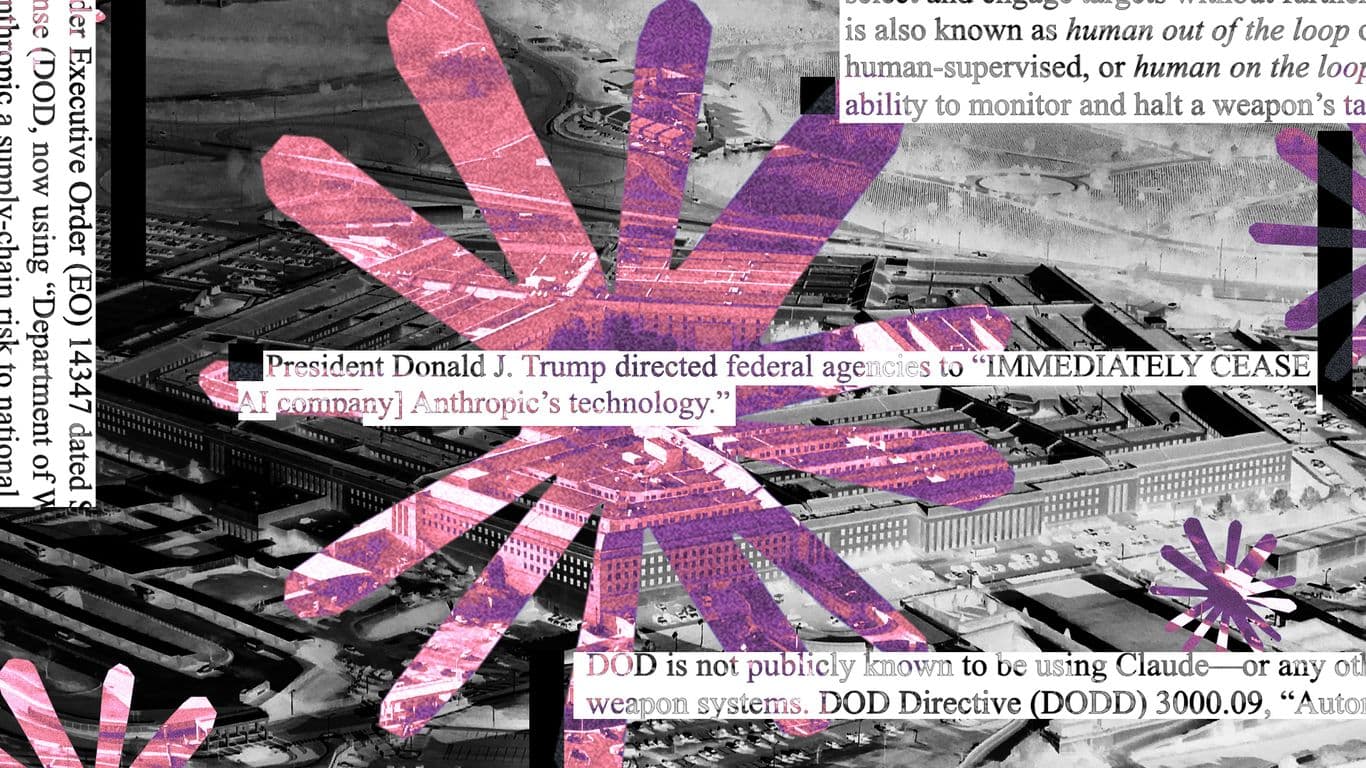

Anthropic and the Pentagon are locked in a heated dispute. The clash centers on Anthropic's refusal to let its AI, notably Claude, be used for autonomous warfare or mass surveillance, even though its capabilities are reportedly unmatched in the field. That refusal has seen the company marked as a 'national security supply chain risk' under the Trump administration, jeopardizing contracts worth billions.

The Stakes

Anthropic's AI prowess isn't in question. Insiders suggest its capabilities far outshine competitors like ChatGPT and Gemini, positioning it as a critical asset for the U.S. military, particularly against adversaries like China. Despite this, the Pentagon's demands for unrestricted AI use are a sticking point.

The financial implications for Anthropic are significant. Blacklisting could mean losing tens of billions in upcoming contracts. For Anthropic, that might mean conforming to government demands or facing severe financial consequences.

Behind Closed Doors

While Defense Secretary Pete Hegseth stands firm on 'all lawful uses,' there's a quiet acknowledgment within government circles of Anthropic's strategic importance. It's believed that without Claude, the U.S.'s edge over China in AI could diminish rapidly. Some insiders felt a resolution was near before negotiations stalled in March.

Emil Michael, a Pentagon negotiator, had indicated negotiations were close to resolution before publicly denying any ongoing talks, highlighting the complex dynamics at play. The meeting of tech and policy here is more than a partnership announcement: it could redefine military AI engagement.

The Path Forward

One potential resolution might involve Anthropic agreeing to certain usage conditions in exchange for resumed contracts. There's talk of financial contributions to Trump Accounts, a community investment vehicle, which might grease the wheels for a deal. With imminent AI advancements threatening to upend cybersecurity, the urgency for resolution grows.

Ultimately, the divide between the Pentagon's conservative demands and Anthropic's liberal ethos illustrates a broader narrative: the collision of old governance models with new technology paradigms. Who ultimately controls how a powerful AI system is used? That question underpins the current struggle as both sides grapple for control over AI's trajectory in national security.

The outcome of this standoff will affect not only Anthropic's future but could also set a precedent for how AI companies navigate similar challenges. As new models emerge, the pressure to strike a deal intensifies, and any deal could redefine how much autonomy AI systems are given in military operations.

Key Terms Explained

Anthropic: An AI safety company founded in 2021 by former OpenAI researchers, including Dario and Daniela Amodei.

Claude: Anthropic's family of AI assistants, including Claude Haiku, Sonnet, and Opus.

Compute: The processing power needed to train and run AI models.

Gemini: Google's flagship multimodal AI model family, developed by Google DeepMind.