AI's Double-Edged Sword: Unlocking Cybersecurity Threats

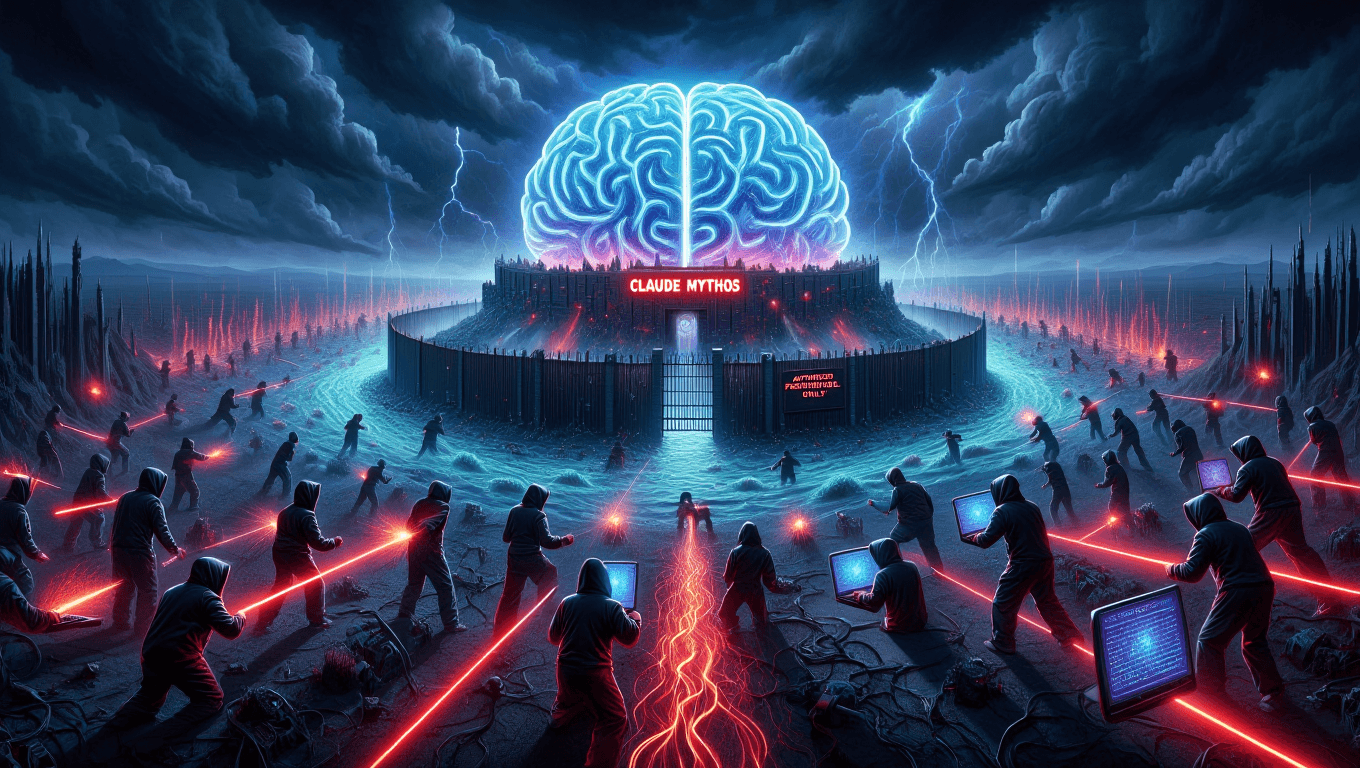

Anthropic's latest AI model, Claude Mythos, is stirring concerns over cybersecurity vulnerabilities. The debate centers on balancing innovation with safety.

Quantum computing might eventually crack data encryption, but artificial intelligence is already setting the stage for potential cybersecurity chaos. Anthropic's new AI model, Claude Mythos, highlights this risk. The company's decision to withhold this model from public release underscores the dilemma: How do we harness AI's potential without compromising digital security?

Anthropic's Dilemma: Innovation vs. Security

Anthropic, a prominent player in AI research, recently unveiled Claude Mythos, a model with remarkable capabilities in identifying cybersecurity vulnerabilities. However, the company won't be releasing it to the public. Why? The threat of cyberattackers exploiting such a tool is too significant. In practice, this decision signals a growing recognition in the AI community of the unintended consequences its creations might cause.

Think about it: AI models could potentially reveal security flaws faster than we can patch them. Enterprises are already grappling with digital transformation challenges, and the gap between pilot and production is where most of them fail. So, does the advent of an AI that could expose vulnerabilities at scale help or hinder them?

Enterprises Caught in AI Chaos

For businesses, the rise of advanced AI models like Claude Mythos represents both an opportunity and a threat. Enterprises don't buy AI. They buy outcomes. Yet, with AI tools capable of uncovering security gaps, companies may find themselves in a paradoxical situation, benefiting from AI insights while simultaneously being at risk. The ROI case requires specifics, not slogans.

As organizations seek to integrate AI into their workflows, change management becomes a turning point. Here’s what the deployment actually looks like: rigorous oversight, meticulous testing, and a reliable response strategy to potential cybersecurity threats. If the deployment of AI models like Claude Mythos is to be beneficial rather than harmful, enterprises need to be prepared to address these challenges head-on.

Balancing Innovation and Safety

Anthropic's cautious approach to Claude Mythos reflects a broader industry dilemma. How do we balance the incredible capabilities of AI with the potential risks they pose? The consulting deck says transformation. The P&L says different. The real cost of deploying advanced AI is measured in the ability to manage its risks effectively.

So, where does this leave us? Should AI development be throttled to mitigate potential risks, or should we trust in our ability to manage these new tools responsibly? The debate is far from settled. Yet one thing is clear: the stakes have never been higher in the quest to tame AI's potential chaos.

Key Terms Explained

Anthropic: An AI safety company founded in 2021 by former OpenAI researchers, including Dario and Daniela Amodei.

Artificial intelligence: The science of creating machines that can perform tasks requiring human-like intelligence, such as reasoning, learning, perception, language understanding, and decision-making.

Claude: Anthropic's family of AI assistants, including Claude Haiku, Sonnet, and Opus.