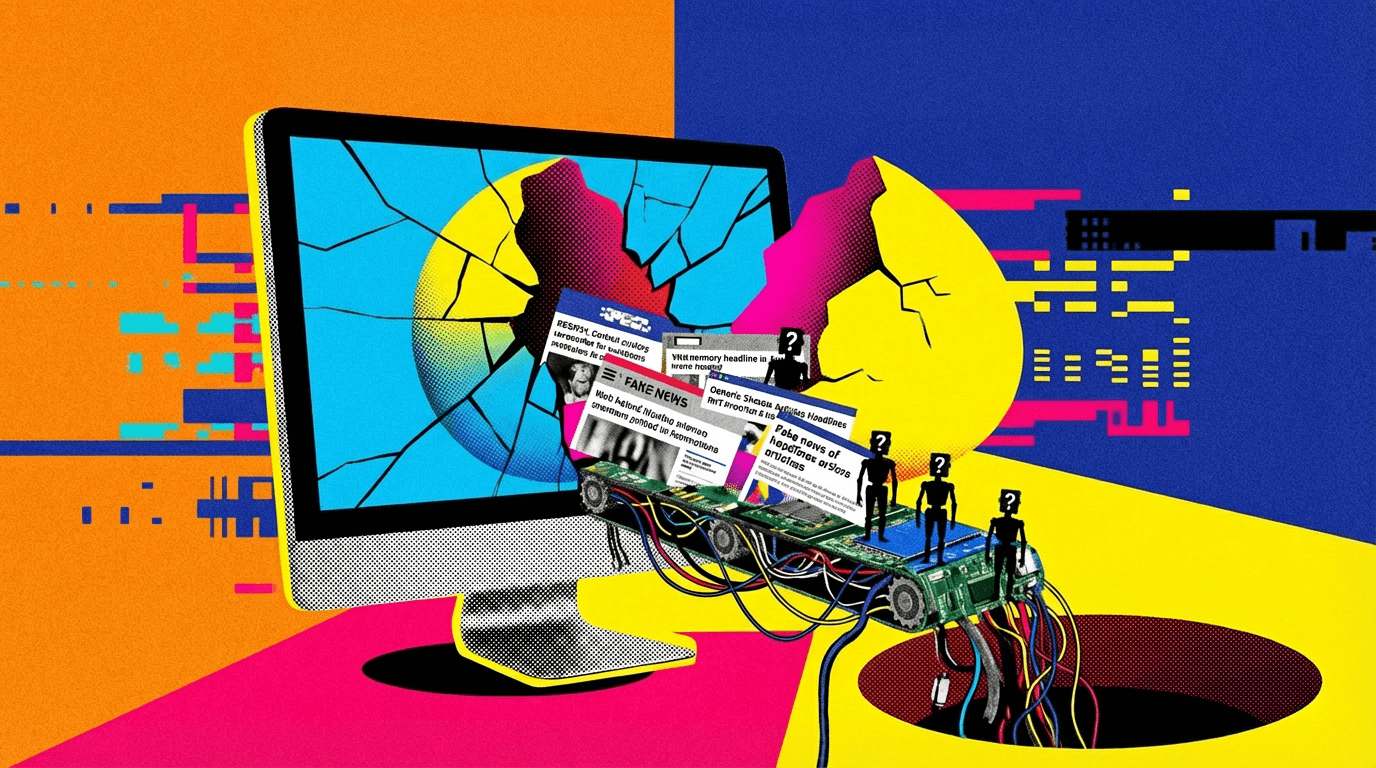

AI Content Farms: The New Epidemic in Online Misinformation

A new system identifies over 3,000 AI-driven sites spreading false info. What's the cost of unchecked AI-generated content?

The digital world is under siege by a rising tide of AI-generated misinformation. NewsGuard and Pangram Labs have launched a real-time system designed to combat these so-called 'AI content farms.' It has already flagged over 3,000 such sites, and new ones appear every month. The growth is relentless and hard to ignore.

The AI Content Farm Boom

In the pursuit of clicks and ad revenue, these AI content farms churn out endless streams of articles, often rife with inaccuracies or fabricated data. The problem isn't just the quantity; it's the erosion of trust in online information. How did we get here? Slapping a model on a rented GPU isn't a convergence thesis, but many seem to think it is.

With AI tools becoming more accessible, the barrier to creating misleading content has all but vanished. The rapid proliferation of these sites raises a critical question: if the AI can hold a wallet, who writes the risk model? The intersection is real. Ninety percent of these projects aren't worth the pixels they're displayed on, but the remaining ten percent can wreak havoc.

The Stakes for Online Truth

Why should readers care? Because misinformation isn't just a digital problem; it's a societal one. When false information spreads unchecked, it distorts public discourse and eventually shapes real-world decisions. NewsGuard and Pangram Labs' initiative is a step in the right direction, but it's only the beginning of a long battle. Show me the inference costs. Then we'll talk about sustainable solutions.

Decentralized compute sounds great until you benchmark the latency. The question is: can the industry innovate fast enough to outpace the cleverness of those who manipulate AI for malicious purposes? It's a race against time, and so far, we're losing.
