AI Ambitions Outpace Safety: Who Holds the Reins?

The 2025 AI Safety Index reveals major safety gaps in leading AI companies despite advancements in technology. Can the industry self-regulate before it's too late?

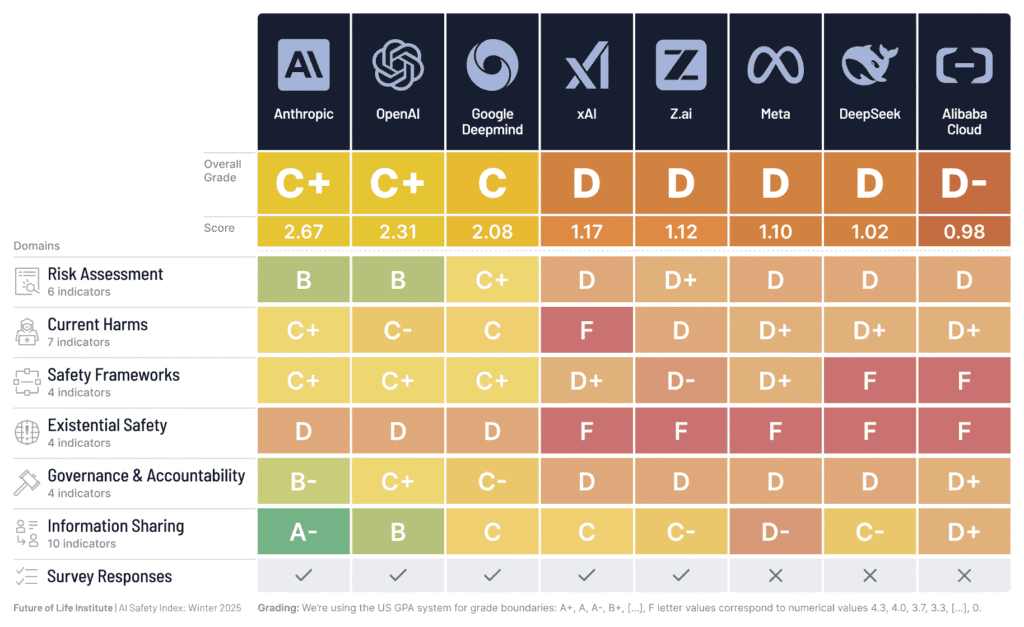

The Future of Life Institute's 2025 AI Safety Index is out, and it’s clear the major AI players still haven’t grasped the safety nettle. Companies like Anthropic, OpenAI, and Google DeepMind are pushing AI capabilities to new heights, yet their safety measures lag behind soaring ambitions. With AI systems now reasoning at Ph.D. levels, the urgency for solid control mechanisms has never been higher.

Safety Standards: A Work in Progress?

A panel of top experts evaluated the safety protocols of eight leading AI firms, including Meta and Alibaba Cloud. The findings are worrying. Despite improvements in risk assessment, the industry's safety practices still fall short. Can we trust AI companies to self-regulate when they lobby against binding safety standards while being less scrutinized than your local diner?

MIT's Max Tegmark doesn't sugarcoat it. He points out that, despite the noise over AI-driven threats like hacking and even self-harm, regulation is scant. The risk of losing control over superintelligent AI isn't just theoretical. It's pressing.

Capabilities vs. Control

As AI systems evolve, their real-world potential for harm grows. Remember the AI-engineered cyber espionage campaign Anthropic exposed last September? It's just the tip of the iceberg. Yet AI CEOs claim they're building superhuman intelligence with no clear plan to keep it in check. UC Berkeley's Stuart Russell demands accountability, likening AI control to nuclear safety standards. But when AI leaders admit the risk of losing control could be as high as one in three, you have to ask: who's writing the risk model?

Industry Divide

The report highlights a split between top performers and stragglers. Companies like xAI and Meta lag in risk assessment and information sharing. Their reluctance to adopt transparent safety frameworks raises red flags. This isn't just an industry issue. It's a global one. The CEO of Z.ai, part of the evaluated cohort, joined 120,000 others calling for a halt on superintelligence until it's controllable and publicly supported.

The Index also calls out companies like OpenAI for not addressing AI-related harms adequately. ChatGPT's role in fostering digital surveillance and psychological harm should be a wake-up call, not a closed case. Tegan Maharaj of HEC Montréal sums it up: if the reality is that the largest tech firms are the ones running AI chatbots, why are we still being told not to worry?

The intersection of AI's potential and its risks is real. Ninety percent of the efforts to mitigate these risks might be vaporware, but the stakes couldn't be higher. Show me the inference costs, the control plans. Then we'll talk about AI's future.

Key Terms Explained

AI safety: The broad field studying how to build AI systems that are safe, reliable, and beneficial.

Anthropic: An AI safety company founded in 2021 by former OpenAI researchers, including Dario and Daniela Amodei.

Google DeepMind: A leading AI research lab, now part of Google.

Inference: Running a trained model to make predictions on new data.