AI Accountability: When Should AI Labs Be Held Liable?

OpenAI supports an Illinois bill limiting AI liability, sparking debate on accountability in tech. What's the real impact on the industry?

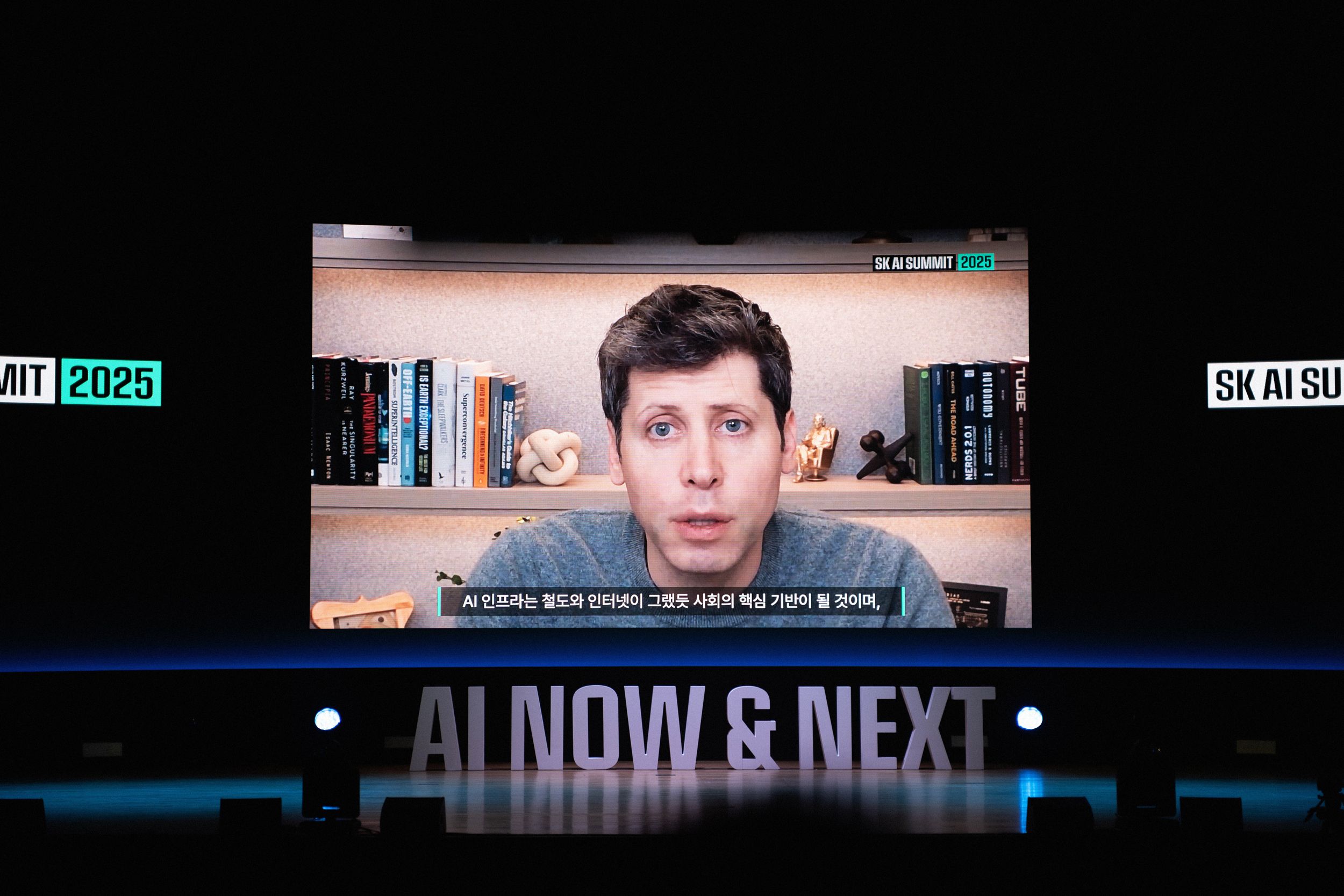

OpenAI, the company behind ChatGPT, recently threw its weight behind an Illinois bill that could change how AI companies handle liability. The proposed legislation aims to limit when AI labs can be held responsible, even in cases where their products cause significant harm.

The AI Liability Debate

AI's rapid development has everyone talking about responsibility. If AI screws up, who's on the hook? The Illinois bill wants to clear the fog, suggesting AI creators shouldn't always be liable for how their products are used, even if the outcome is a mess.

But here's a thought: if a self-driving car crashes, do we blame the maker or the driver? The bill says it depends. This could set a precedent, as more states grapple with AI's potential for both innovation and error.

Why Should You Care?

AI is in our lives more than ever, from chatbots to autonomous vehicles. That raises an essential question: if these systems fail, who pays the price? The Illinois bill could influence national policy, shaping how tech companies approach AI development and accountability.

For startups and tech giants alike, this is a big deal. Liability matters for insurance, investment, and ultimately, innovation. While the pitch deck might look rosy, real-world impact is what counts.

Industry Impact

I've been in that room. Here's what they're not saying: loosening AI liability could fast-track development, but at what cost to safety? Reduced liability might embolden risk-taking, and it could also lead to disaster. Is that a risk worth taking?

The founder story is interesting, but the metrics are more interesting. If AI firms aren't held accountable, will they cut corners? Or will this freedom boost creativity and competition? It's a delicate balance that could reshape the industry.

In the end, AI's potential is enormous, but so is its capacity for error. This bill's outcome could be a bellwether for how we balance innovation with responsibility. And as always, what matters is whether anyone is actually building and using these systems responsibly.
