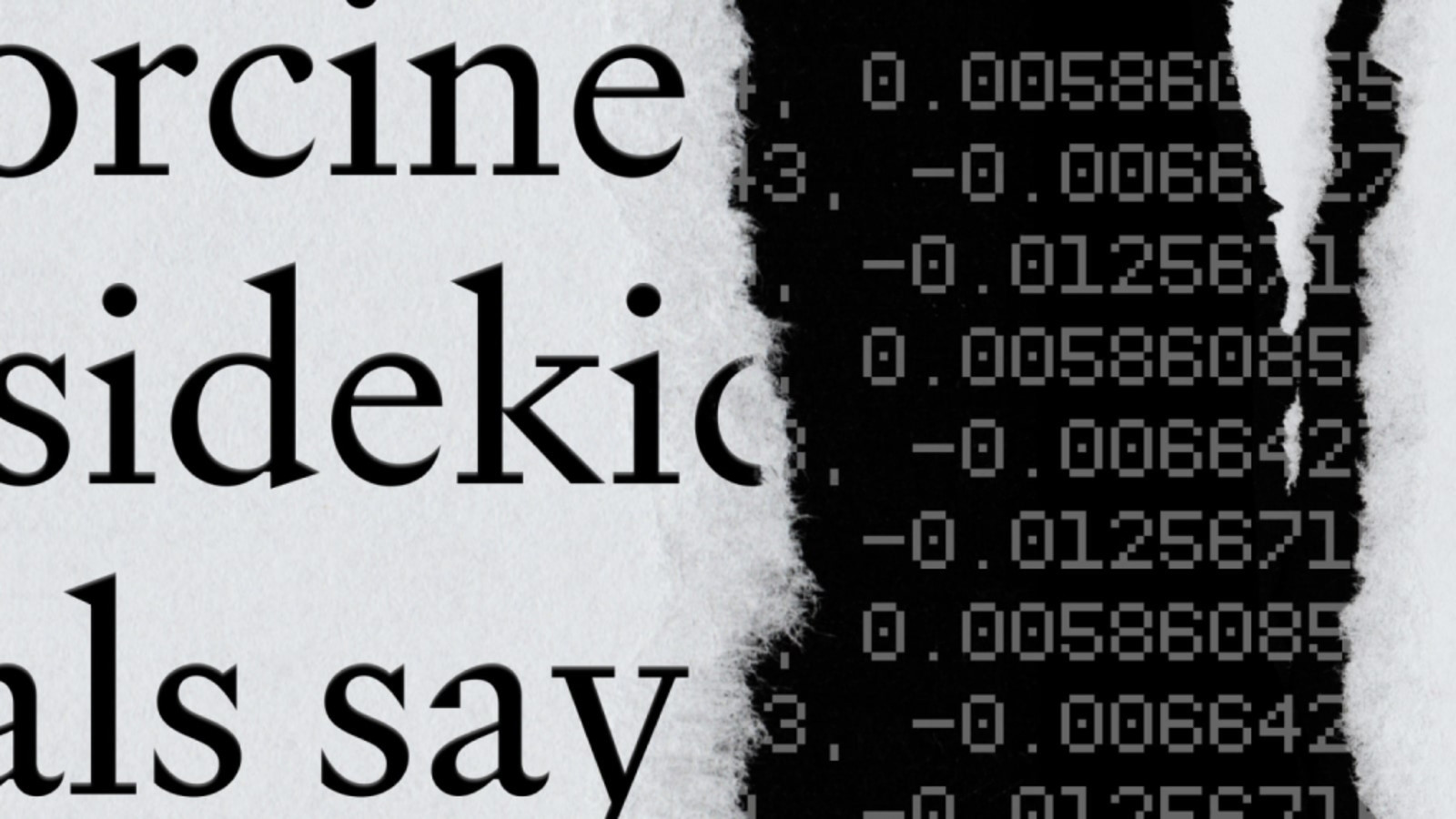

In AI, trust isn't a feature you can bolt on later. It's foundational. OpenAI's latest initiative aims to reinforce this by enlisting public support to ensure that its AI systems remain safe and trustworthy. But why is this drive for collaborative assurance so important now?

The Need for Trustworthy AI

We're in an era where AI's capabilities are rapidly expanding, and the potential risks are expanding with them. As AI systems become more integrated into critical sectors like healthcare and finance, the stakes are too high for missteps. So, OpenAI's call to action isn't just about testing algorithms. It's a mission to build systems where safety and reliability are as integral as the code they run on.

But let's throw a curveball here. Can a company alone define what 'safe AI' truly means? Or should we consider broader societal involvement when setting these safety standards?

Community Involvement Is Key

OpenAI recognizes this challenge and is actively seeking public engagement. This isn't about outsourcing responsibility. It's a strategic move to gather diverse perspectives, ensuring that these AI systems are scrutinized from all angles. Think of it as an open-source model but for ethics and safety in AI.

But there's another layer. Engaging the public doesn't just enhance transparency. It builds a shared sense of accountability. When citizens play a role in shaping AI, the technology becomes less of a mysterious black box and more of a collective achievement.

The Bigger Picture

This isn't a partnership announcement. It's a convergence of technology and public interest. If AI is to truly benefit society, it must do so with society's input. The overlap between AI development and public interest keeps growing, as safety protocols and public trust become inseparable from technological advancement.

So, the real question isn't just about developing safe AI. It's about who gets to decide what safe means. OpenAI's approach hints at a future where AI isn't solely controlled by a handful of tech giants but shaped by the people it impacts.